Referential transparency

Better abstractions

Every program in any programming language in order to be executed is eventually transformed into the language native to machine—bunch of ones and zeros. We, as humans, not that good at reading, writing and understanding programs represented in binary. At the dawn of programming we actually did that but it was clear that it’s too hard, error-prone and not suitable for implementing large programs. We started to seek for abstractions, like opcode mnemonics, functions, classes and objects, all that to help us break down program in smaller pieces and being able to reason about them. Imperative programming with currently dominant OOP paradigm embraces mutability — program is a collection of objects changing over time. It’s almost never possible to look at some class’ method and say what would be the output for given arguments because of mutable nature of objects — member variables, global variables, dependency on other objects state, etc. Functional programming with referentially transparent expressions shows a different way of thinking about the programs, instead of objects and values changing over time — have small composable functions yielding the same output for the same input. It gives the ability to better understand and reason about the code, know exactly what function does just by looking at its signature, be more disciplined about program responsibilities and come up with better orthogonal design.

Expressions vs Statements

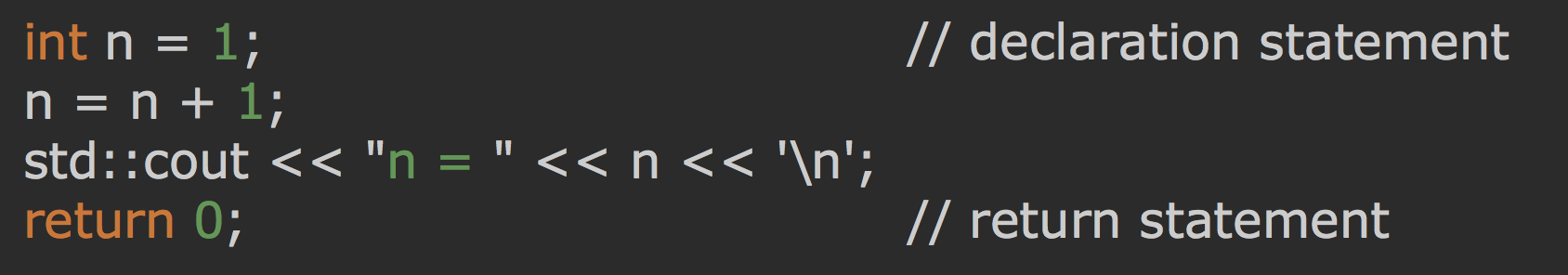

First thing we need to understand is that functional languages operate on expressions rather than statements and referential transparency is all about expressions. Here’s an example of some imperative statements:

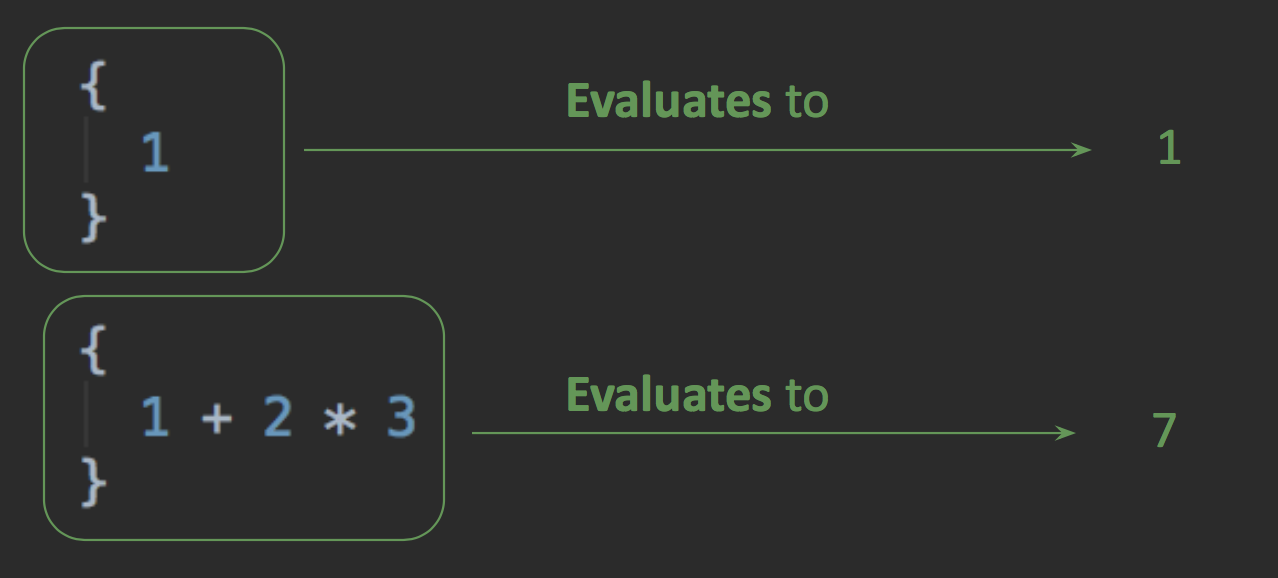

In contrast, expressions are sort of like values that can be evaluated.

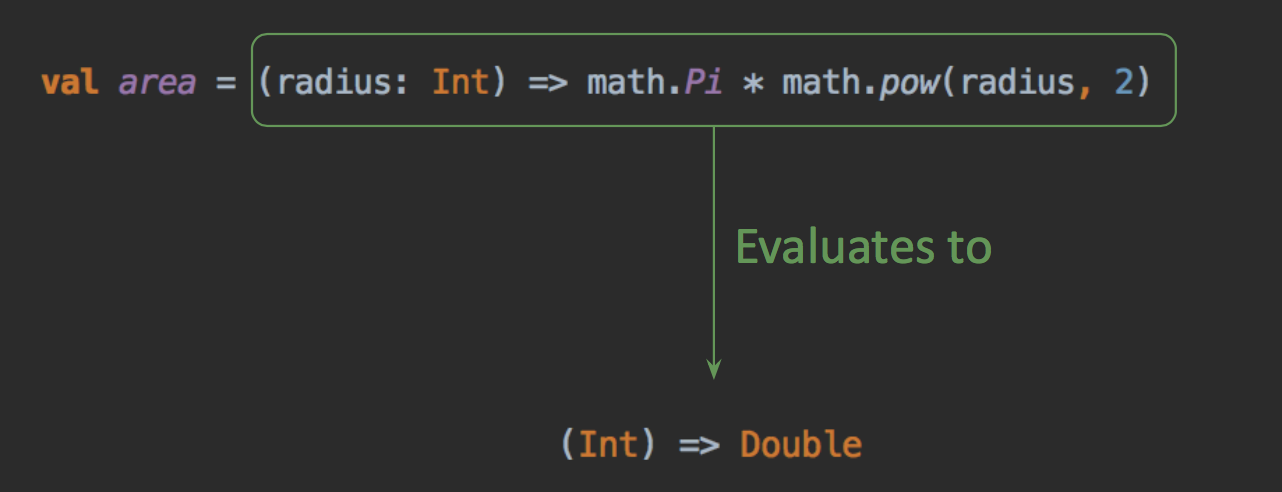

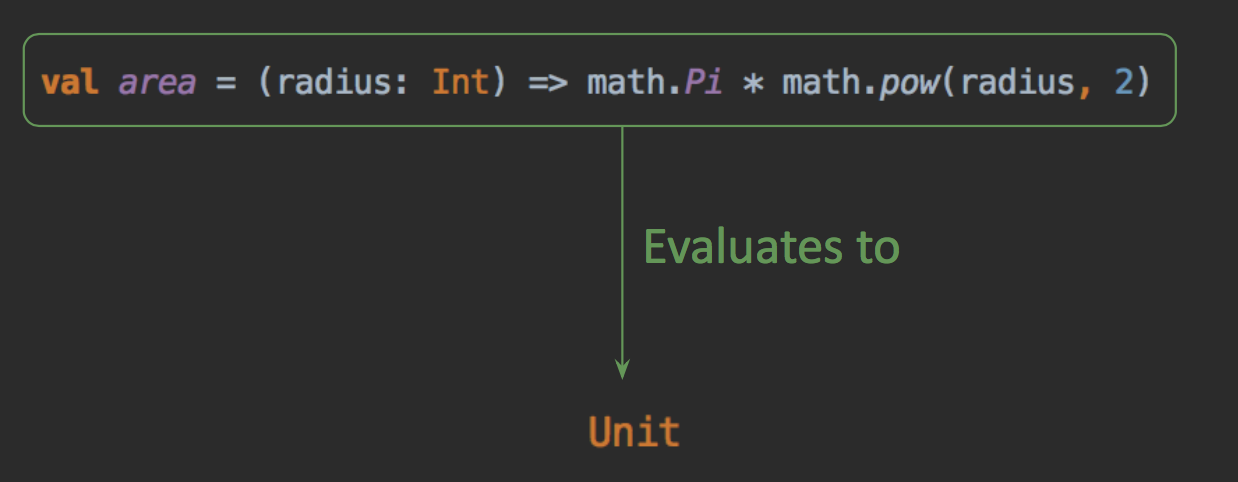

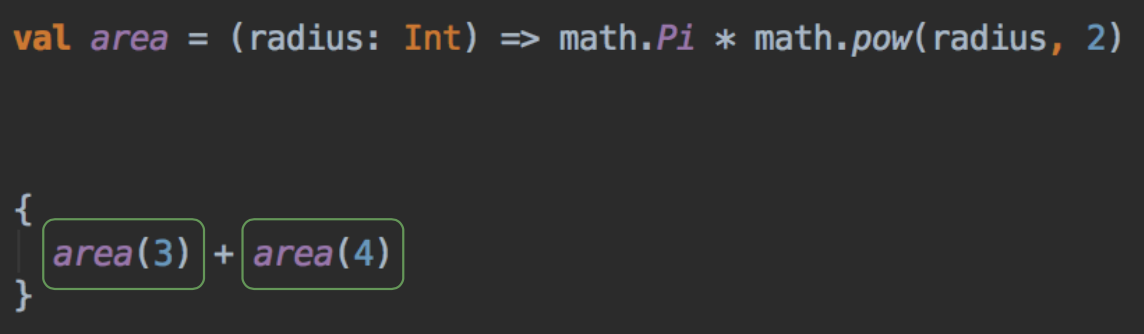

Now let’s see a function declaration that computes an area of a circle:

The expression of areas function body evaluates to (Int) => Double (a function taking an integer and returning double). The inner math.Pi and math.pow(radius, 2) are expressions as well and the thing is: everything is an expression. Even the function definition itself is an expression.

Referentially transparent expressions

Now that we know what expression is let’s see what referential transparency means and why is it an important property:

An expression is referentially transparent if it can be replaced with its value without changing the program’s behavior.

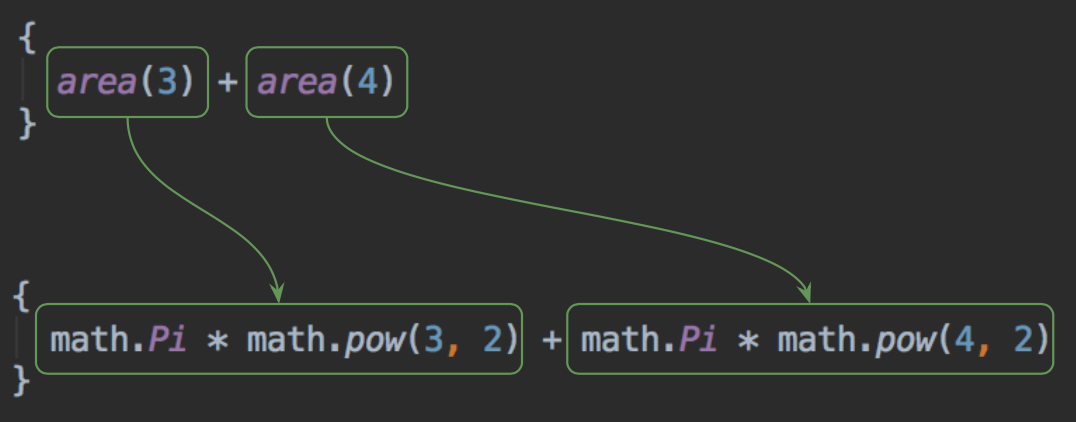

Let’s take our area function as an example. Suppose we have an expression that computes a sum of two circles with radiuses 3 and 4.

If area(3) and area(4) are referentially transparent expressions then we can substitute the function call with the function body and the end result should remain the same:

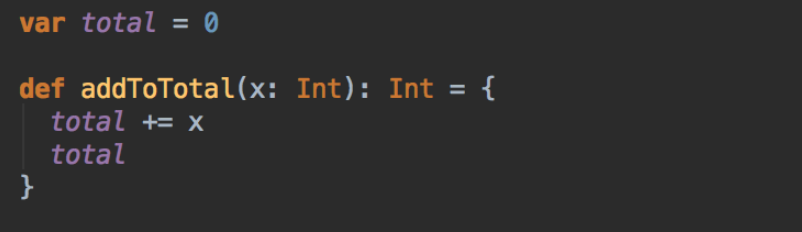

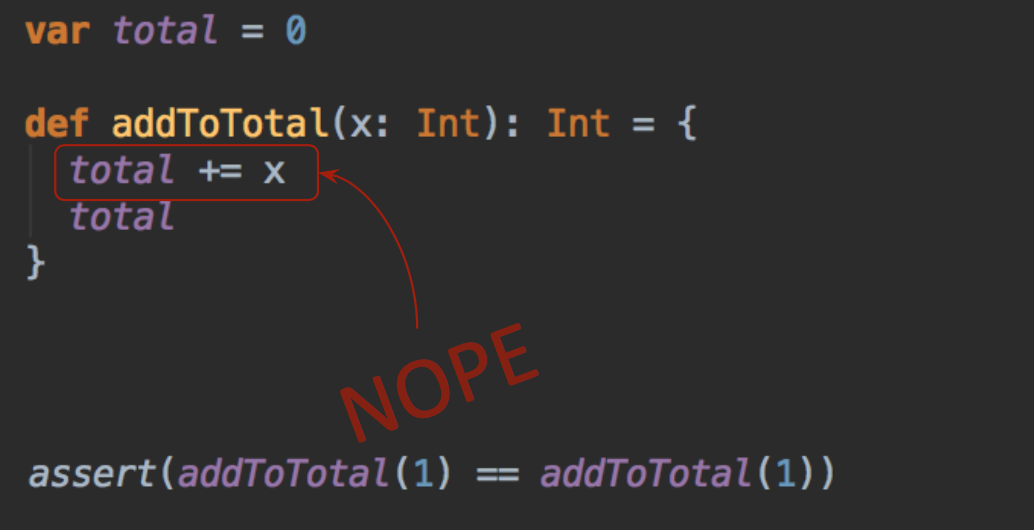

Here’s an example of a function that is not referentially transparent:

Pretty simple function that you expect to see in any imperative language codebase but it’s a big no-no in functional languages. This function have a hidden dependency on a total variable, by doing mutation our substitution model no longer works:

Having this kind of assert failing in a unit test would be surprising. We can’t think about addToTotal as a black box, in case of any problems the “user” of the function has to go inside a function in order to understand what’s going on and it gets even worse with the large code base and deep nesting.

By looking at multiple calls to addToTotal in different places it’s almost impossible to say what value will be returned. Non referentially transparent expressions usually mean some shared state or assignment operator and this is where it gets really tricky when you add concurrency into mix. Remember how is it to spend a week chasing a bug which turns out to be a race condition that happens once a month? Pure functions, on the other hand, depend only on their arguments which makes it easier to mitigate concurrency problems and implement parallelization.

Pure functions?

“Pure function” is a common term in functional world that is tightly related to RT. There are a lot of discussions on purity vs referential transparency, different people have different opinions on what it actually means. To keep things simple let’s blur the distinction and assume that all functions that are referentially transparent are called “pure functions”.

“Conditional” purity

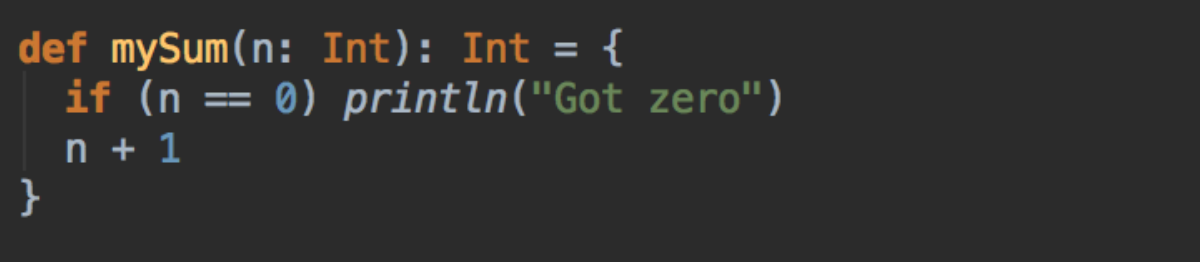

Unlike Haskell, some hybrid programming languages, like Scala, allow programmer to do uncontrolled side effects, basically do imperative programming. Consider the following function:

Our function signature says “give me an Int and I’ll give you an Int back” but it doesn’t say anything about printing to standard out. Is this function referentially transparent? Well, the answer is “it depends”. If I’m only interested in the function result then yes, mySum(42) == mySum(42), always and for all arguments. If I’m also reading the standard output then no, mySum(42) ≠ mySum(0) because there will be an extra line printed on the second call.

Sometimes it’s useful to think about a function as pure even if it strictly isn’t one. This is where programmer has to make a choice and the general guideline is: if your business logic doesn’t depend on side effects of your “pure” function then its okay to treat it as pure, otherwise it will do more harm than good. For example, if you’re writing a command line application that interacts with user via standard in and out then function like mySum should not be considered pure. In many other cases it may be fine to stick some logging message for debugging purposes or what not and still treat a function as pure. The trick is to scope your side effects in a way that it will not be observed by the caller. I’m talking about unclosed file handles, unreleased memory, etc. There is no such dilemma in Haskell though, the language is strict and it forces programmer to reflect any computational effects in function signature.